The global race to expand data capacity is colliding with two hard limits – land and power.

As AI workloads surge and cities strain to accommodate hyperscale growth, engineers are looking somewhere new for space and cooling: beneath the sea.

It sounds ambitious, even implausible. Yet underwater data centers are no longer science fiction. Microsoft proved the concept. China is now operating commercial-scale modules. And the results suggest that the ocean could be one of the most efficient environments available for computing.

Why Take Data Centers Underwater?

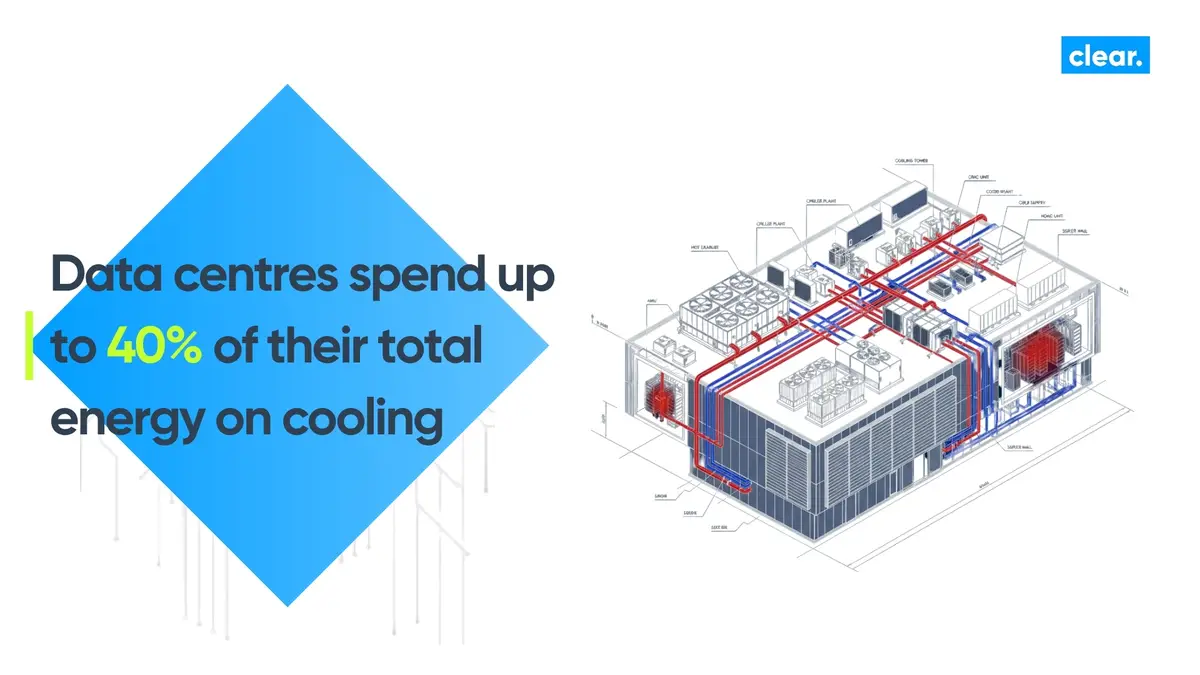

Conventional data centers can spend up to 40% of their total energy on cooling. At that upper end, every megawatt of compute load demands a large fraction of a megawatt more to run the chilled air, pumps and compressors that keep equipment stable.

The ocean, by contrast, offers a natural and abundant heat sink. At depths of around 100 metres, water temperature remains consistently low and stable. That makes it possible to achieve 40–60% reductions in cooling power simply through passive heat transfer to the surrounding seawater.
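To see what those figures could mean for a whole facility, here is a rough calculation. It assumes the upper-bound 40% cooling share quoted above and treats all non-cooling energy as unchanged; both are simplifications, and `facility_saving` is a hypothetical helper name used only for illustration.

```python
def facility_saving(cooling_share: float, cooling_reduction: float) -> float:
    """Fraction of total facility energy saved when only cooling power shrinks.

    cooling_share: cooling energy as a fraction of total facility energy (0..1).
    cooling_reduction: fractional cut in cooling power (0..1).
    """
    if not (0.0 <= cooling_share <= 1.0 and 0.0 <= cooling_reduction <= 1.0):
        raise ValueError("both arguments must be fractions between 0 and 1")
    # Non-cooling energy is assumed unchanged, so the facility-wide saving is
    # the share of energy spent on cooling times the cut applied to it.
    return cooling_share * cooling_reduction


if __name__ == "__main__":
    for reduction in (0.40, 0.60):
        saving = facility_saving(cooling_share=0.40, cooling_reduction=reduction)
        print(f"{reduction:.0%} less cooling power -> {saving:.0%} less total energy")
```

Under these assumptions the facility-wide saving works out to roughly 16% to 24%. A site that starts with a smaller cooling share would save proportionally less.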

For operators, the benefits are clear: less energy use, a lower carbon footprint, and no need to compete for scarce land near urban or coastal hubs. For users, there’s another advantage: these installations can sit just offshore, supporting low-latency edge computing where population density and data demand are highest.

How It Works: The Engineering Beneath the Waves

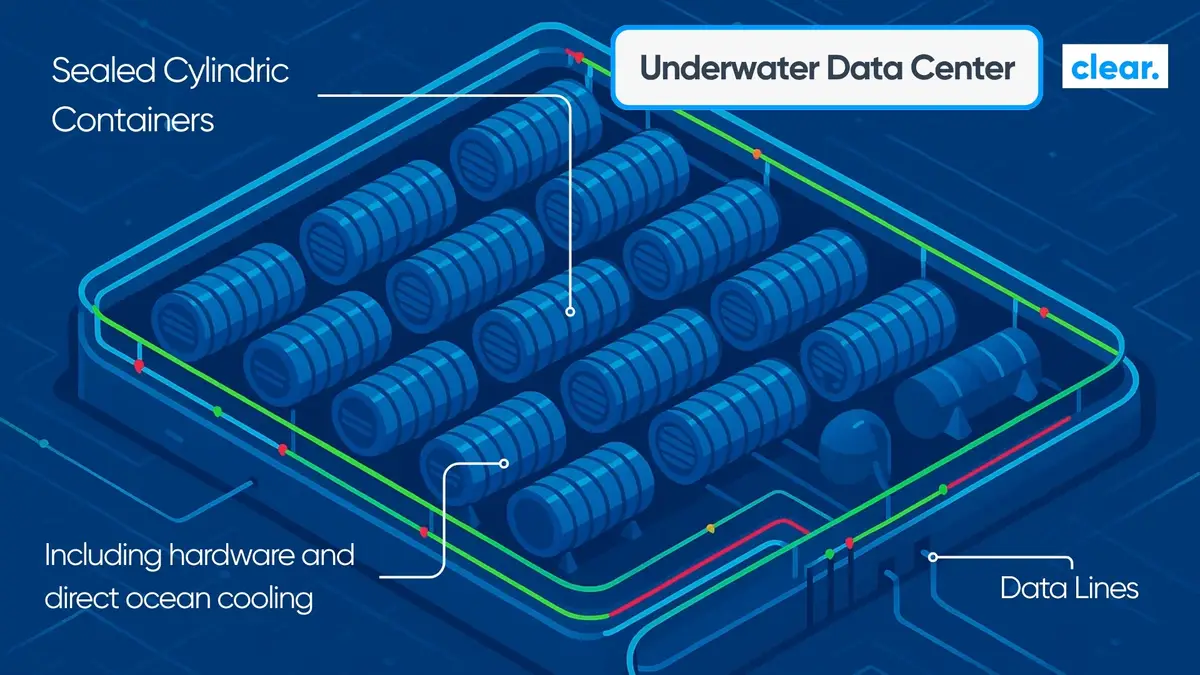

Underwater data centers are sealed, pressure-resistant steel hulls or pods designed to operate for years without human access. The interior atmosphere is often filled with dry nitrogen to prevent corrosion and condensation.

Servers are either immersed in dielectric fluid, which absorbs heat directly from the chips, or cooled by seawater piped through radiators at the back of each rack. In both models, the ocean itself becomes the cooling medium. There are no chillers, cooling towers or large HVAC systems — only convection and the vast thermal capacity of the sea.

The design challenges are significant. Each module must withstand deep-water pressure, salt exposure, marine growth and movement. Power and data are delivered through undersea cables. Maintenance is minimal, with entire modules retrieved only periodically for servicing or upgrades.

But where these systems work, they work remarkably well.
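To give a sense of the pressure a hull must withstand, the sketch below applies the standard hydrostatic relation (surface pressure plus density × gravity × depth) at the roughly 100-metre depth mentioned earlier. The seawater density is a typical textbook value rather than a figure from any deployment, and `absolute_pressure_pa` is a hypothetical helper name.

```python
SEAWATER_DENSITY = 1025.0  # kg/m^3, typical seawater value (assumption)
GRAVITY = 9.80665          # m/s^2, standard gravity
ATMOSPHERE = 101_325.0     # Pa, standard sea-level air pressure


def absolute_pressure_pa(depth_m: float) -> float:
    """Absolute pressure in pascals on a hull at the given depth in metres."""
    if depth_m < 0:
        raise ValueError("depth must be non-negative")
    # Hydrostatic pressure of the water column plus the air pressure above it.
    return ATMOSPHERE + SEAWATER_DENSITY * GRAVITY * depth_m


if __name__ == "__main__":
    depth = 100.0  # metres, the depth cited in the article
    bar = absolute_pressure_pa(depth) / 1e5
    print(f"Absolute pressure at {depth:.0f} m: {bar:.2f} bar")
```

With these values the result is about 11 bar, or roughly eleven times the pressure at the surface. Shallower sites face proportionally less.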

Proof in Practice: Microsoft and China

Microsoft’s Project Natick remains the best-known and most thoroughly documented trial.

The company deployed its first sealed data center pod in 2015, followed by a larger 12-rack unit sunk 117 feet (about 36 metres) underwater off Scotland’s Orkney Islands in 2018. The results were impressive: the servers inside were eight times more reliable than equivalent land-based systems, largely because the environment was controlled, unstaffed and free from mechanical vibration or temperature variation. Power and cooling efficiency were also notably improved, thanks to the cold seawater and proximity to renewable energy from Orkney’s tidal and wind sources.

(natick.research.microsoft.com)

Image Source Credit: itpro.com /Microsoft/Jonathan Banks

However, in 2024 Microsoft confirmed that Project Natick would not continue.

This decision was not driven by environmental concerns. Instead, the company cited operational and commercial factors — the complexity of maintenance, the challenge of upgrading sealed systems, and the difficulty of scaling such a niche infrastructure model into global operations. In short: the technology worked, but the business case did not.

(datacenterdynamics.com)

(itpro.com)

Even so, Natick remains an important milestone. It proved that underwater operation is both technically feasible and environmentally safe, with Microsoft reporting no measurable negative impact on marine life — and even noting that algae and fish had colonised the pod’s exterior.

(news.microsoft.com)

Meanwhile, China has taken the concept further.

In 2024, full-scale AI-optimised underwater data centers were deployed off Hainan Island, using modular construction and renewable power from nearby sources. Reports indicate electricity consumption around 30% lower than comparable land-based facilities. (scientificamerican.com) (interestingengineering.com)

Image Source Credit: CGTN /China Media Group

China’s approach shows that underwater deployments can scale rapidly when industrial backing and energy integration align. Entire modules are pre-assembled, tested, and then submerged, in a process that takes weeks rather than months. For regions constrained by power or real estate, this model could soon be part of mainstream capacity planning.

Sustainability and the Environmental Equation

From a sustainability standpoint, the appeal is strong. Seawater cooling can reduce the mechanical cooling load to below 10% of total facility energy use, cutting emissions and operating costs.
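One way to read that 10% figure is through power usage effectiveness (PUE), a standard industry ratio of total facility energy to IT energy that this article does not itself cite. The sketch below assumes cooling is the only overhead unless stated otherwise; real sites also lose energy to power conversion and other services. `pue_from_cooling_share` is a hypothetical helper name.

```python
def pue_from_cooling_share(cooling_share: float,
                           other_overhead_share: float = 0.0) -> float:
    """Implied PUE (total energy / IT energy) from overhead shares of total energy."""
    it_share = 1.0 - cooling_share - other_overhead_share
    if not 0.0 < it_share <= 1.0:
        raise ValueError("shares must leave a positive fraction for IT load")
    # PUE is total energy divided by IT energy; with total normalised to 1,
    # that is the reciprocal of the IT share.
    return 1.0 / it_share


if __name__ == "__main__":
    for label, share in (("40% cooling share", 0.40), ("10% cooling share", 0.10)):
        print(f"{label}: implied PUE of about {pue_from_cooling_share(share):.2f}")
```

On these simplified terms, moving from a 40% cooling share to a 10% share corresponds to an implied PUE falling from about 1.67 to about 1.11.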

Because these sites sit offshore, they can also connect directly to renewable energy – wind, wave, tidal or floating solar – rather than drawing from terrestrial grids already under strain. Microsoft’s Orkney trial demonstrated exactly that, using 100% locally generated renewable power.

Importantly, environmental studies from Project Natick found no harmful impact on marine ecosystems. Heat from the modules dissipated quickly through currents, and biodiversity actually increased around the structure. These findings helped validate underwater cooling as an environmentally compatible approach.

The Practical Limits

The concept remains promising, but scaling it is not simple.

Maintenance access requires retrieving entire modules, which can mean downtime and cost. Engineering complexity and regulatory requirements, covering seabed rights, marine safety, environmental permitting and cable landings, add further layers of challenge.

Microsoft’s decision to end the project highlights the difference between a successful experiment and a viable business model. The lessons, however, are invaluable: they show that the physics and the sustainability benefits are sound. The next question is who can make the logistics and economics work at scale.

What This Means for the Data Center Ecosystem

If underwater data centers move beyond the pilot stage, they will reshape more than cooling strategies. They will reshape project teams.

These builds sit at the intersection of data center engineering and marine infrastructure.

They require specialists in subsea cabling, corrosion-resistant materials, sealed-environment commissioning, and remote monitoring. Environmental and regulatory expertise will be critical. OEMs will need to adapt power and cooling technologies for submerged conditions.

For project owners, contractors and systems integrators, that creates a new delivery model and a new class of highly specialised talent.

The People Behind the Innovation

At Clear, we follow emerging technologies across mission-critical projects, from hyperscale builds and brownfield expansions to commissioning, automation and advanced energy integration.

Underwater data centers may still be experimental, but they rest on the same disciplines that underpin the projects we deliver every day: rigorous project planning, technical assurance, and safety-led commissioning.

The future workforce for these programmes will need hybrid expertise: data center specialists with experience in offshore or marine environments, engineers who understand both power and subsea systems, project managers fluent in both MEP delivery and maritime regulation, and controls specialists capable of designing for sealed, unmanned modules.

These are not standard profiles, and they will not be found through conventional recruitment channels. They require deep sector fluency, international reach and an understanding of how new technologies intersect with proven engineering. That’s precisely the space Clear operates in.

Looking Ahead

Underwater data centers are unlikely to replace traditional facilities, but they point toward a future where sustainability is built into the physical environment and cooling is treated as a design priority from the outset.

For operators and contractors, the opportunity is to learn from these early programmes and prepare the teams, skills and partnerships that could one day deliver similar innovation.

Because whether it’s under the surface or above it, progress in the mission-critical world always depends on the same foundation: the right people, in the right place, at the right time.

Sources:

- Microsoft Project Natick – natick.research.microsoft.com

- Microsoft News – Project Natick Sustainability Findings

- Data Center Dynamics – Microsoft Confirms Project Natick is No More

- IT Pro – Why Microsoft Scrapped Its Project Natick Underwater Data Center Trial

- Scientific American – China Powers AI Boom with Undersea Data Centers

- Interesting Engineering – World’s First Commercial Underwater Data Center

- Brightlio – Underwater Data Centers Explained

- IT Pro – Project Natick (itpro.com)

- CGTN – China’s Underwater Data Centers