By 2027, inference will overtake training as the dominant AI workload in Data Centers. That’s not a long-range forecast. That’s next year. And most operators are still hiring for the wrong workload.

Inference already accounts for roughly two thirds of all AI compute in 2026, according to Deloitte. The global inference market is projected to more than double, from $113.5 billion in 2025 to $253.75 billion by 2030. McKinsey projects inference will represent more than half of all AI compute and 30 to 40% of total Data Center demand by the end of the decade.
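As a rough check on the market figures above, the growth multiple and the implied compound annual growth rate can be worked out directly. This is a minimal sketch: it assumes the quoted 2025 and 2030 figures and five annual compounding periods, and the variable names are illustrative.

```python
# Market figures quoted above, in billions of US dollars.
market_2025 = 113.5
market_2030 = 253.75
years = 2030 - 2025  # assumption: five annual compounding periods

# How many times larger the 2030 market is than the 2025 market.
growth_multiple = market_2030 / market_2025

# Constant yearly growth rate that would produce that multiple.
implied_cagr = growth_multiple ** (1 / years) - 1

print(f"Growth multiple: {growth_multiple:.2f}x")
print(f"Implied annual growth: {implied_cagr:.1%}")
```

On those assumptions, the market grows a little over 2.2 times, which works out to somewhere between 17% and 18% a year.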

The sector has spent 3 years building the workforce for AI training: large centralised GPU clusters, brute-force compute, massive power draws. That era isn’t ending, but it’s no longer the whole story. Inference is triggering a fundamental shift in facility design, power profiles and operational staffing. And the workforce composition it demands is structurally different. In a market where 51% of operators already struggle to find qualified candidates and only around 15% of applicants meet minimum job qualifications, this shift compounds an existing crisis.

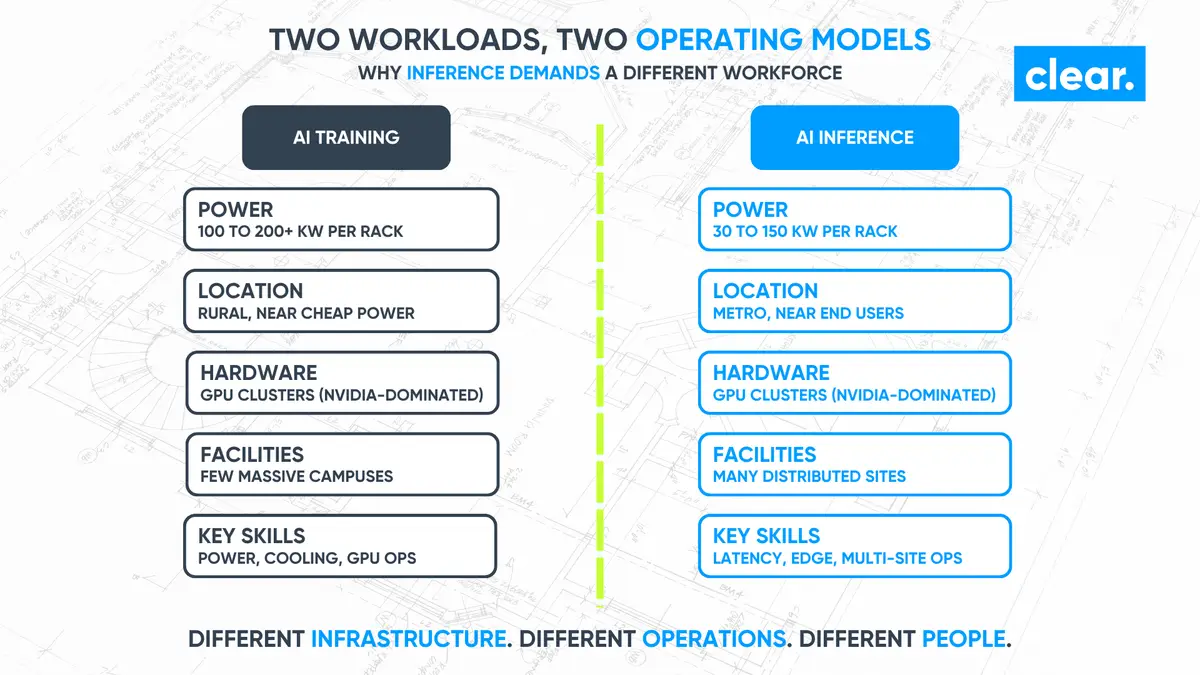

Inference-optimised environments need engineers skilled in real-time monitoring, edge integration and latency management. Not the same profile as the team that commissions a 200 kW-per-rack training cluster. Different infrastructure. Different operations. Different people.

Hiring managers who are still staffing for the training era are building teams for facilities that look less and less like the ones they’re actually delivering. HR directors managing agency PSLs built for traditional Data Center roles are seeing diminishing returns. And C-Suite leaders signing off on inference programmes need to know: the talent strategy has to change with the technology strategy.

Two Workloads, Two Operating Models

Training builds AI models. It’s a brute-force compute problem: tightly synchronised GPU clusters, high-bandwidth interconnects, sustained power draws at 100 to 200+ kW per rack. In frontier systems, some racks are pushing toward 1 MW. These facilities are large, centralised, and built where land and grid capacity allow, regardless of proximity to end users.

Inference runs those models in production. Search, chatbots, recommendation engines, autonomous systems, enterprise automation. Each task is less compute-intensive than training. But in aggregate, the volume is enormous: billions of queries, every day, each demanding a response in milliseconds.

Different power profiles. 30 to 150 kW per rack, not 200+.

Different locations. Metro and near-metro, optimised for low latency.

Different hardware. ASICs and CPU-plus-accelerator configs, not just GPU clusters.

Different cooling. Lower density per rack, but across more sites with less headroom.

Training is a capital-intensive sprint. Inference is a revenue-generating marathon. The teams who deliver them should be composed differently.

How Inference Changes Workforce Composition

This is the shift most operators haven’t planned for. It’s not about hiring a few different specialists. It’s about redesigning the composition of your operational teams for a fundamentally different type of facility. Every unfilled role on a live programme has a direct cost: delayed commissioning, extended contractor cover, missed revenue from uncommissioned capacity.

When the facility type itself is changing, the cost of hiring the wrong profile is just as damaging as not hiring at all.

Clear has spent 9 years placing engineers into mission-critical Data Center environments, working exclusively within Data Center, power and cooling infrastructure. Every consultant recruits within this sector and no other. The profile shift we’re seeing for inference-optimised facilities is distinct from anything the training era required, and our cross-market network spanning operators, contractors and OEMs across three continents gives us early visibility of where that shift is heading.

From centralised depth to distributed breadth

Training facilities are concentrated campus environments. A small number of massive sites, each with teams who manage a tightly integrated, high-density stack. Inference will be distributed. More sites, in more locations, closer to end users.

That means more operations teams, across more geographies, each managing facilities that are smaller but more numerous. The team composition shifts from deep specialisation in one mega-facility to consistent operational capability replicated across a portfolio. In a sector where 40% of professionals are planning to leave their roles, citing burnout, limited career development and poor work-life balance, getting this composition right is also a retention question. The wrong team structure accelerates attrition.

Real-time monitoring, edge integration, latency management

These are the 3 defining skill sets of the inference era. In training, the engineering focus is power and cooling: get enough electricity to the GPUs, remove the heat, keep the cluster synchronised. In inference, the constraints are different. Round-trip time to the end user determines service quality. Workload volatility creates demand spikes that the infrastructure must absorb in real time. Edge deployments push compute out of centralised facilities entirely.

The engineer running an inference facility needs to think like a network architect as much as a mechanical or electrical specialist. BMS integration gets more complex. Monitoring systems need to track latency, throughput and hardware health simultaneously across multiple sites.
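To make the multi-site monitoring point concrete, here is a minimal sketch of a check that tracks latency, throughput and hardware health together. Everything in it is an assumption for illustration: the `SiteSnapshot` fields, the threshold values, the site names and the sample readings are hypothetical, not taken from any real monitoring product.

```python
from dataclasses import dataclass


@dataclass
class SiteSnapshot:
    site: str
    p99_latency_ms: float     # 99th-percentile round-trip latency
    throughput_qps: float     # queries served per second
    failed_accelerators: int  # accelerators currently reporting faults


# Hypothetical thresholds; real limits depend on the service being run.
MAX_P99_LATENCY_MS = 50.0
MIN_THROUGHPUT_QPS = 1_000.0
MAX_FAILED_ACCELERATORS = 2


def find_alerts(snapshots):
    """Return {site: [reasons]} for every site breaching at least one threshold."""
    alerts = {}
    for s in snapshots:
        reasons = []
        if s.p99_latency_ms > MAX_P99_LATENCY_MS:
            reasons.append("latency")
        if s.throughput_qps < MIN_THROUGHPUT_QPS:
            reasons.append("throughput")
        if s.failed_accelerators > MAX_FAILED_ACCELERATORS:
            reasons.append("hardware")
        if reasons:
            alerts[s.site] = reasons
    return alerts


# Made-up readings for three sites.
snapshots = [
    SiteSnapshot("metro-a", 32.0, 4_200.0, 0),
    SiteSnapshot("metro-b", 78.5, 3_900.0, 1),
    SiteSnapshot("edge-c", 41.0, 650.0, 4),
]
alerts = find_alerts(snapshots)
print(alerts)
```

The point of the sketch is the shape of the job: one view across every site, with network and hardware signals evaluated side by side rather than by separate teams.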

This isn’t a minor skills extension. It’s a different professional profile.

Then there’s hardware diversification. Training has been an NVIDIA-dominated ecosystem: CUDA, H100s, B200s, GB200 NVL72 racks. Inference opens the field. AMD Instinct, Google TPUs, AWS Inferentia, custom ASICs from Meta, Groq, Cerebras, SambaNova. The operations team in an inference facility may be managing 3 or 4 different accelerator architectures in the same building. For hiring managers, that means recruiting for hardware flexibility, not hardware specialisation.

Where the Inference Workforce Will Come From

The distributed, network-centric, hardware-diverse nature of inference means the sourcing strategy has to be broader than what worked for training.

The most immediate route is cloud and network operations. Engineers who’ve managed distributed cloud infrastructure, CDN edge nodes or telecommunications network operations already understand latency-sensitive environments, multi-site management and heterogeneous hardware. The technical crossover to inference-optimised Data Centers is direct. What they need is mission-critical context: the operational discipline that comes from working in environments where downtime isn’t an SLA violation but a facility-level failure. Critically, the best of these candidates are passive. They aren’t on job boards and won’t respond to generic outreach. Reaching them requires a recruiter with deep, relationship-based networks that span both Data Center and adjacent infrastructure sectors.

Running alongside that is upskilling existing Data Center teams. The core disciplines still apply: power, cooling, physical security, and commissioning. What changes is the emphasis. More network awareness. More hardware diversity. More multi-site coordination. The operators investing in structured development now will have inference-capable teams before their competitors start recruiting for them.

And there’s the geographic challenge. Training facilities are concentrated in rural locations with cheap power. Inference needs to be near population centres. That means recruiting in metro markets, competing with every employer in those cities for technical professionals, and often building Data Center teams in locations that haven’t historically had them. The Tier-2 talent challenge and the inference challenge are converging.

How Hiring Managers Should Be Preparing Today

The 2027 inflection point is 12 months away. The workforce decisions that determine whether your inference programmes are staffed correctly are being made now.

Audit your team composition against your workload trajectory. If inference is growing as a proportion of your capacity, your operations teams should be evolving to reflect it. Map the skills you have against what inference-optimised facilities actually require: real-time monitoring, edge integration, latency management, and multi-architecture hardware support. The gaps will be specific and addressable, but only if you identify them now.
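A skills audit of this kind can start as a simple set comparison. The sketch below is illustrative only: the required skills are the four named in the paragraph above, while the team names and the skills attributed to each team are hypothetical.

```python
# Skills named above for inference-optimised facilities.
REQUIRED_SKILLS = {
    "real-time monitoring",
    "edge integration",
    "latency management",
    "multi-architecture hardware support",
}

# Hypothetical teams and skills, for illustration only.
team_skills = {
    "site-ops-london": {"real-time monitoring", "power", "cooling"},
    "site-ops-dublin": {"latency management", "edge integration", "commissioning"},
}


def skill_gaps(teams, required):
    """Return, per team, the required skills that the team does not cover."""
    return {name: sorted(required - skills) for name, skills in teams.items()}


gaps = skill_gaps(team_skills, REQUIRED_SKILLS)
for team, missing in gaps.items():
    print(f"{team}: missing {', '.join(missing) or 'nothing'}")
```

In practice the inputs would come from your own competency records, but even this level of mapping shows which gaps are shared across sites and which are local.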

Diversify your sourcing beyond traditional DC engineering. Cloud operations, network engineering, telecommunications. These adjacent fields produce professionals with directly transferable skills for inference environments. A specialist recruitment partner with networks across both Data Center and adjacent infrastructure can identify these candidates before they’ve thought about the transition.

Clear has made 160+ placements for operators and contractors including NTT, VIRTUS, Winthrop and Dornan, and 83+ placements across power and cooling OEMs including Anord Mardix and Airedale. That cross-sector network, spanning hyperscale operators, MEP contractors and critical power and cooling manufacturers, is what makes adjacent sourcing work. A generalist agency simply doesn’t have the technical vocabulary to screen these crossover candidates against the real demands of mission-critical environments.

And start the upskilling conversation with your existing teams. The engineer who commissioned your last training facility understands your standards, your clients and your commissioning processes. Developing them into inference-ready engineers is faster and more reliable than external hiring alone. It’s also the strongest retention lever in a market where 40% of professionals are planning to leave and a third of the technical workforce is at or nearing retirement age.

The Inference Era Is a Workforce Redesign

The 2027 crossover from training-dominant to inference-dominant isn’t a technology trend to watch. It’s a workforce planning deadline. The facilities being commissioned over the next 2 to 3 years will run inference workloads for a decade or more. The teams that operate them need to be composed for that reality, not for the training era that preceded it.

Training built the models.

Inference is where the value is delivered.

The workforce composition needs to shift with it.

Clear recruits across Data Center, power and cooling infrastructure from London, New York and Dubai. As inference reshapes where and how facilities are built and operated, the talent requirements are changing with them. We’ve spent 9 years building the network and the sector knowledge to help operators get ahead of that shift.

clear-er.com