The Wall Street Journal reported in early May that Google is in talks with SpaceX to launch its first orbital Data Center prototypes, part of an internal programme called Project Suncatcher, targeted for early 2027. The story moved space stocks. It also confirmed something the sector has been saying out loud for two years.

We’ve run out of room.

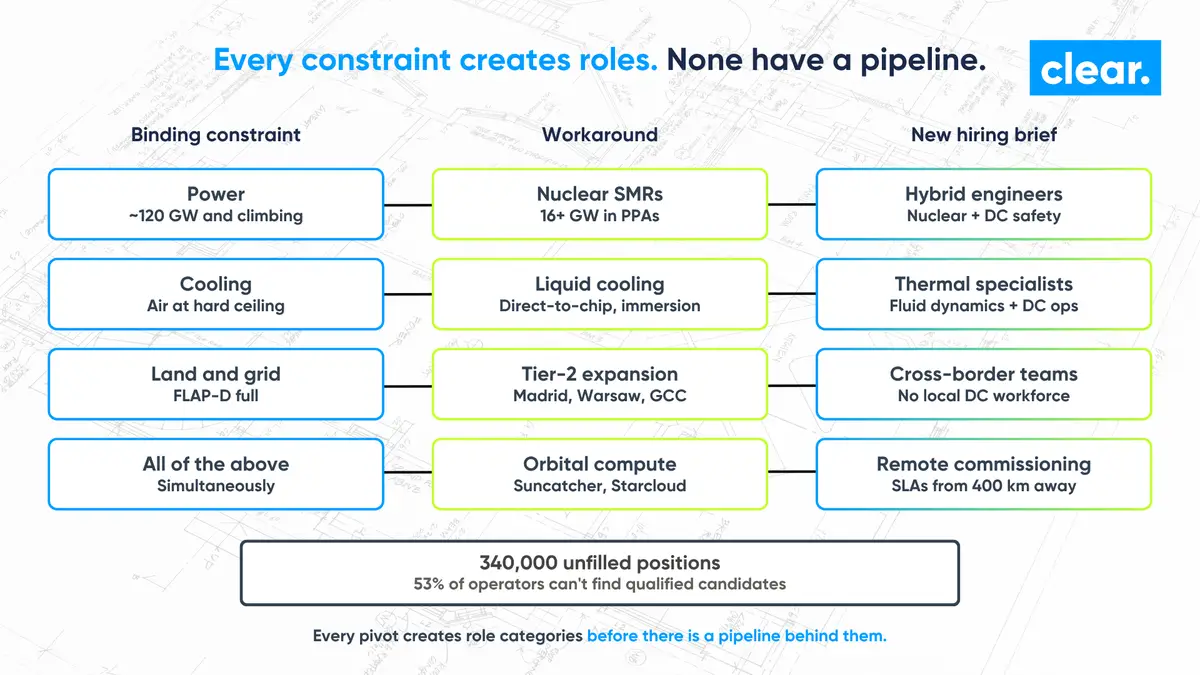

Not capital. Not silicon. The physical inputs to scaling AI compute (power, land, cooling water, grid headroom and people) have all moved from “manageable” to “binding” inside 24 months. The conversation in our consultant calls has tracked the shift in real time. Operators stopped asking how to compete on salary. They started asking how to commission capacity at all.

If you read Suncatcher as a moonshot, you missed the point. It’s the latest entry in a chain of responses to the same constraint, and each one in that chain has rewritten the hiring brief.

The orbital push isn’t one company

Google’s talks with SpaceX are the highest-profile entry in what’s now a small but real category.

Starcloud, the Y Combinator graduate that put an Nvidia H100 into orbit on Starcloud-1 in November 2025, raised $170 million in March 2026 at a $1.1 billion valuation. Its follow-on satellite is built around Nvidia’s Blackwell B200 with 100 times the power generation of the first. Axiom Space and Spacebilt launched the first two orbital Data Center nodes on 11 January 2026, with a 2027 plan to bring optically interconnected infrastructure to the ISS. Lonestar Data Holdings sent its Freedom payload to lunar orbit and is targeting commercial service in low Earth orbit in Q4 2026.

This isn’t speculative. There are payloads on orbit today. There is venture capital priced in. And there is a customer in Google publicly negotiating launch capacity.

The question worth asking isn’t whether it works. It’s why so many serious operators have decided that “build it in space” is a sensible answer at all.

The constraint stack on Earth

The numbers explain the move. Global Data Center critical power is on track to nearly double between 2023 and 2026, reaching 96 GW. US demand is expected to hit 76 GW this year, up from roughly 50 GW in 2024. AI workloads are now driving more than 40% of that growth.

The grid hasn’t kept pace. Interconnection queues in several US and European regions have stretched to between 5 and 10 years. Ireland’s connection lead times have made new builds in Dublin commercially uncertain. Texas has effectively told operators to bring their own generation. Northern Virginia is rationing.

Water is the next constraint after power. Community opposition is the constraint after that.

When you’re at 96 GW and climbing, with a grid that can’t reliably deliver another gigawatt for the better part of a decade, you start looking at any input that’s still abundant. Sunlight in orbit is one of them. So is unused vacuum for thermal rejection. Suncatcher’s pitch isn’t really “compute in space.” It’s “power and cooling that someone hasn’t already booked.”

The on-Earth answers came first, and they all hit talent

Before anyone seriously launched a Data Center, hyperscalers tried every option on this planet. Each one created a hiring brief that the sector wasn’t ready to deliver against.

Meta locked in up to 6.6 GW of nuclear capacity. Amazon anchored a $500 million financing round for X-energy, in a partnership targeting 960 MW of small modular reactors. Google’s 615 MW PPA enabled the restart of a previously decommissioned plant. AWS and Talen Energy secured a 17-year, 1.92 GW PPA at Susquehanna in Pennsylvania. By the end of 2024, US nuclear PPAs tied to Data Center demand had crossed 16 GW.

Each of those announcements quietly created demand for hybrid engineering profiles that didn’t exist in the previous hiring cycle. People who understand nuclear safety culture and mission-critical Data Center operations. The intersection of those two communities is small. The pipeline isn’t really there yet.

Liquid cooling tells the same story. Direct-to-chip and immersion deployments are now standard at the densities AI training rigs demand, and they’re displacing two decades of air-cooling skill. The thermodynamics expertise that used to sit in semiconductor fabs and motorsport is suddenly in hyperscaler org charts.

Tier-2 expansion is the same again. Hyperscalers are now building in markets that don’t have a Data Center workforce. The recruitment problem in Tier-2 isn’t competition for talent. It’s the absence of any local talent pool to compete for.

Every binding constraint forces a workaround. Every workaround creates roles. None of those roles have a finished pipeline behind them.

The talent verdict the sector is still avoiding

The aggregate numbers are sobering. Some 340,000 Data Center positions are projected to remain unfilled in 2026, according to Bureau of Labor Statistics analysis quoted across industry reporting this year. In the Uptime Institute’s 2024 Global Data Center Survey, 53% of operators said they were struggling to find qualified candidates, up from 38% in 2018. Commissioning specialists, the people who decide whether a billion-pound campus delivers on schedule, take more than 75 days on average to place.

The pattern across the constraints described above is consistent. Every time the sector pivots to solve a power, cooling, or siting problem, it creates a hiring brief that needs people the industry hasn’t grown yet.

Nuclear-coupled campuses need engineers fluent in two safety regimes.

Liquid-cooled AI clusters need thermal specialists who understand workload-aware design, not just plant-room HVAC.

Orbital prototypes will need people who can write operational SLAs for sites that physically cannot be visited, and commission payloads from 400 kilometres away.

None of those roles existed when most of the industry’s job specifications were written. Generalist recruitment, which depends on keyword matching against established job titles, will produce CVs that look right and candidates who can’t actually do the work. We’re already seeing it on MEP design briefs that should have been straightforward.

The shortage isn’t going to be solved by paying 25 to 30% above market, although the market is already doing that. It will be solved by the firms that have been talking to the engineers behind these technologies for years, not weeks.

What it means for the next 18 to 36 months

If you’re planning hiring for the next 18 to 36 months, the operational implication of Suncatcher, Starcloud, the SMR PPAs and the liquid cooling rollout is the same. The sector will keep pivoting to solve its constraint problem. Each pivot will create role categories before there is a pipeline behind them. Time-to-hire, time-to-shortlist, and shortlist quality are about to matter more, not less.

For operators, contractors and OEMs, two things are worth doing now. The first is workforce planning that anticipates the next pivot rather than the last one. The second is being honest about which recruitment partners genuinely have access to the engineers building this infrastructure, and which are still working from a generalist database.

The infrastructure race left the atmosphere this year. The talent problem has been off-grid for a lot longer.